Understand Claude Code's Impact on Delivery

Jellyfish gives you full visibility into Claude Code adoption, agentic activity, and delivery outcomes across your engineering org so you can evaluate what’s working, guide enablement, and scale with evidence.

Measure Claude Code Adoption

Get a clear picture of adoption across teams and workflows with system-derived signals that require no manual tracking.

Quantify Agentic Impact

Get clear visibility into how autonomous coding workflows affect throughput, quality, and team velocity as your org enters the agentic era.

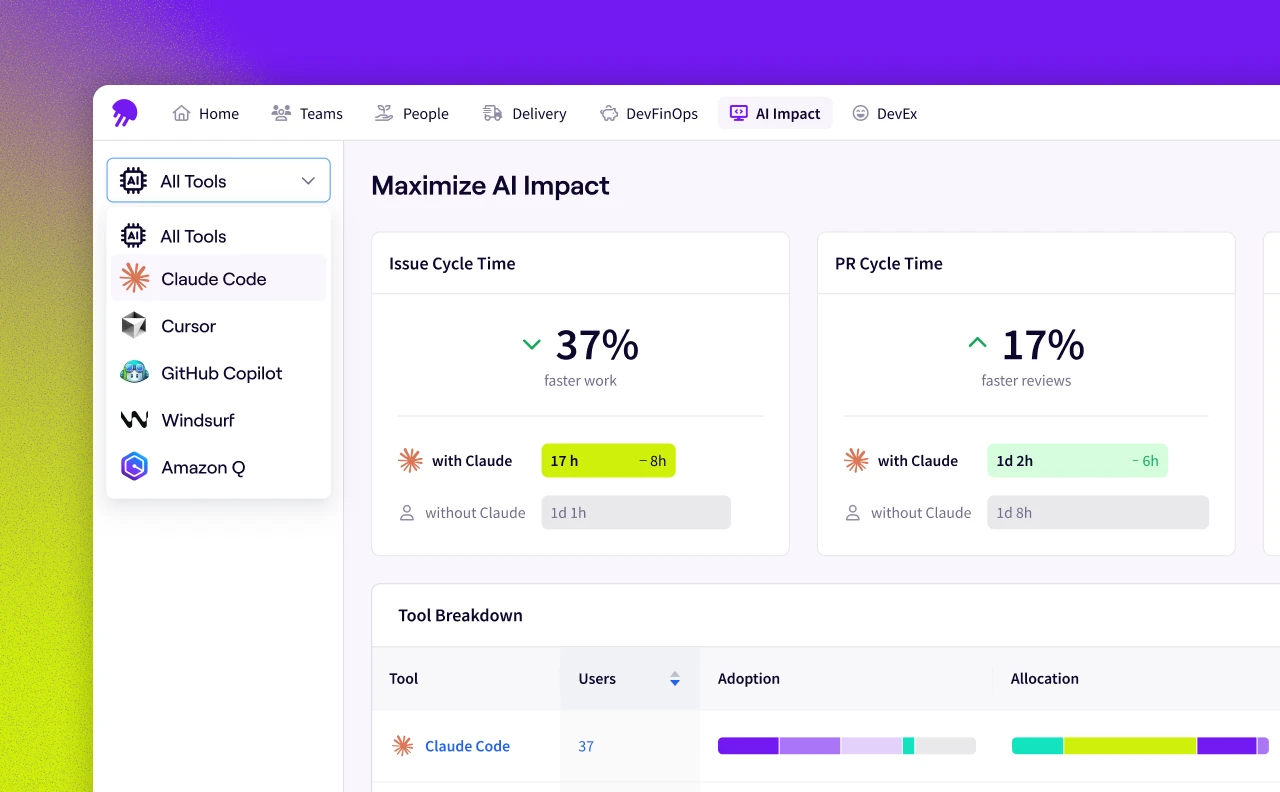

Validate Claude Code ROI

Tie Claude Code usage to delivery outcomes so leadership can see the return, not just the spend.

“The level of visibility Jellyfish provides transformed AI from an experimental tool into a proven engine for business growth. Our teams are shipping code faster and delivering twice the value to our customers in half the time.”

Tom Osowski

Engineering Manager at TaskRabbit

Everything You Need to Evaluate Claude Code's Impact

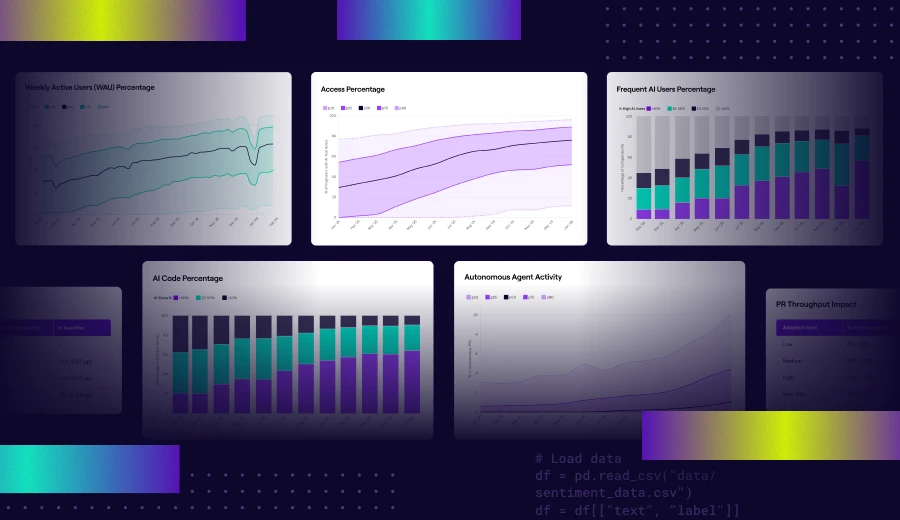

Adoption Metrics

Track how Claude Code usage grows across teams and repositories. Spot inflection points, identify stalls, and understand the pace of adoption at a glance.

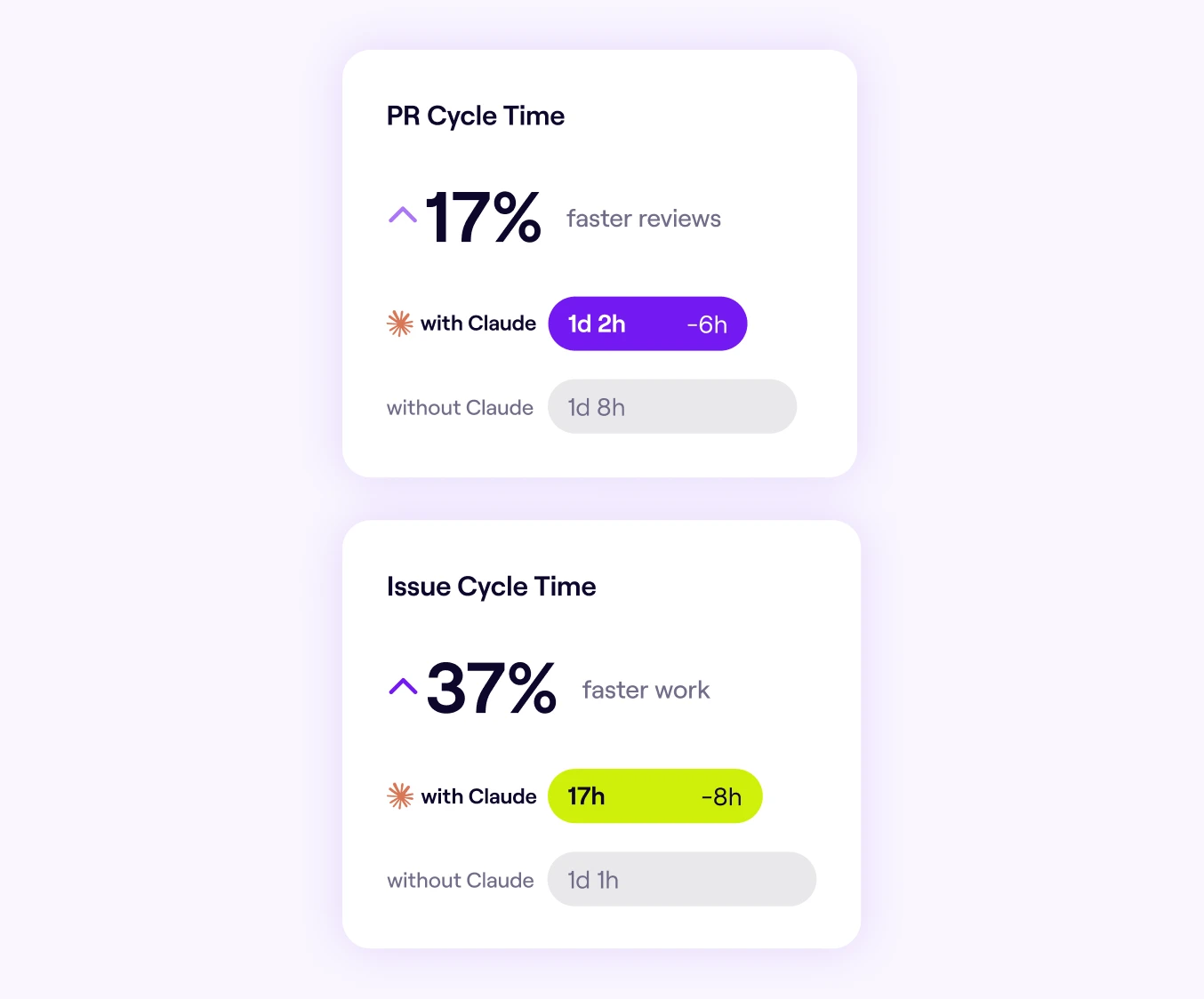

Delivery Outcome Signals

Link Claude Code usage to cycle time, throughput, and PR velocity. Understand whether agentic workflows are accelerating delivery or introducing friction.

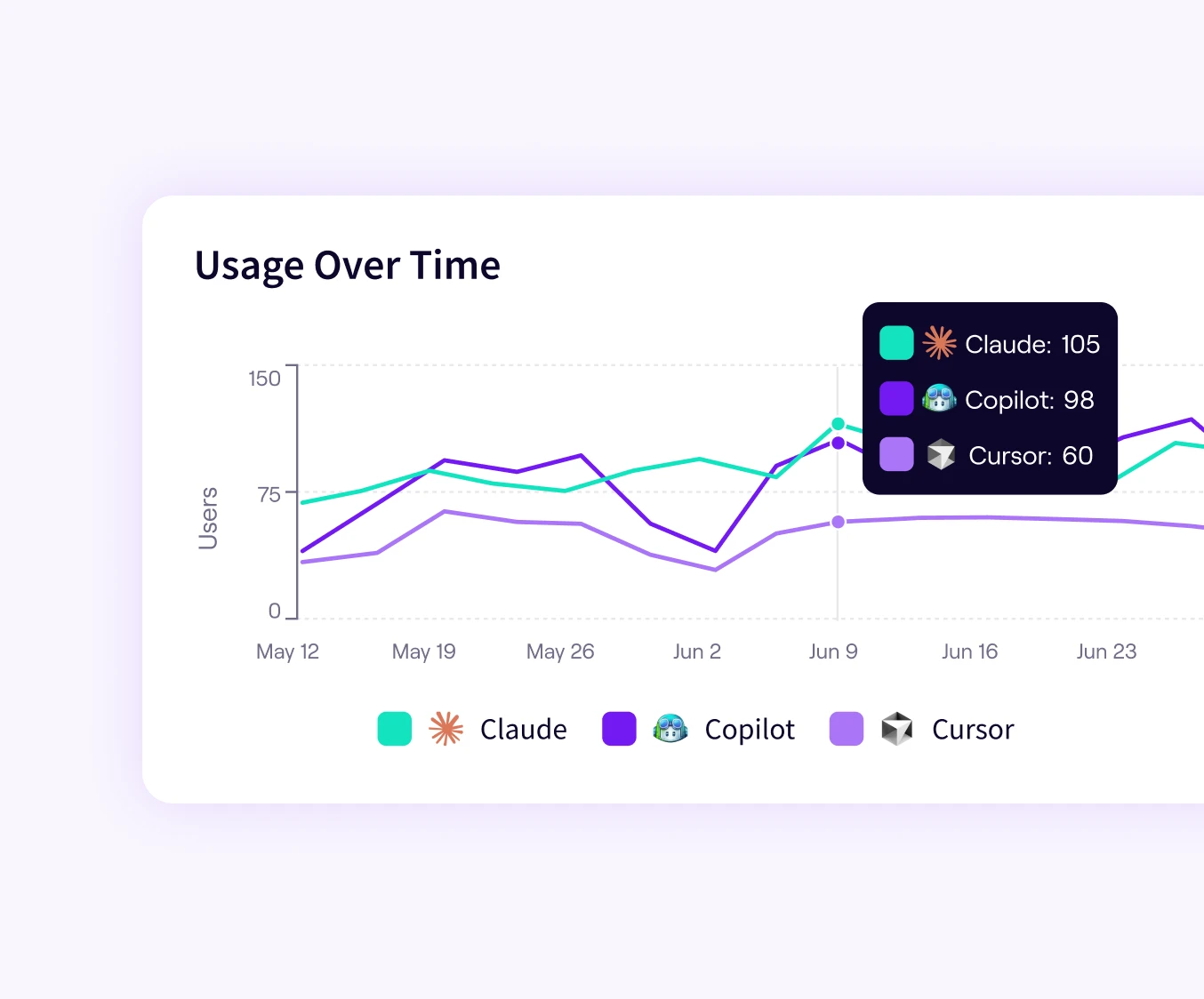

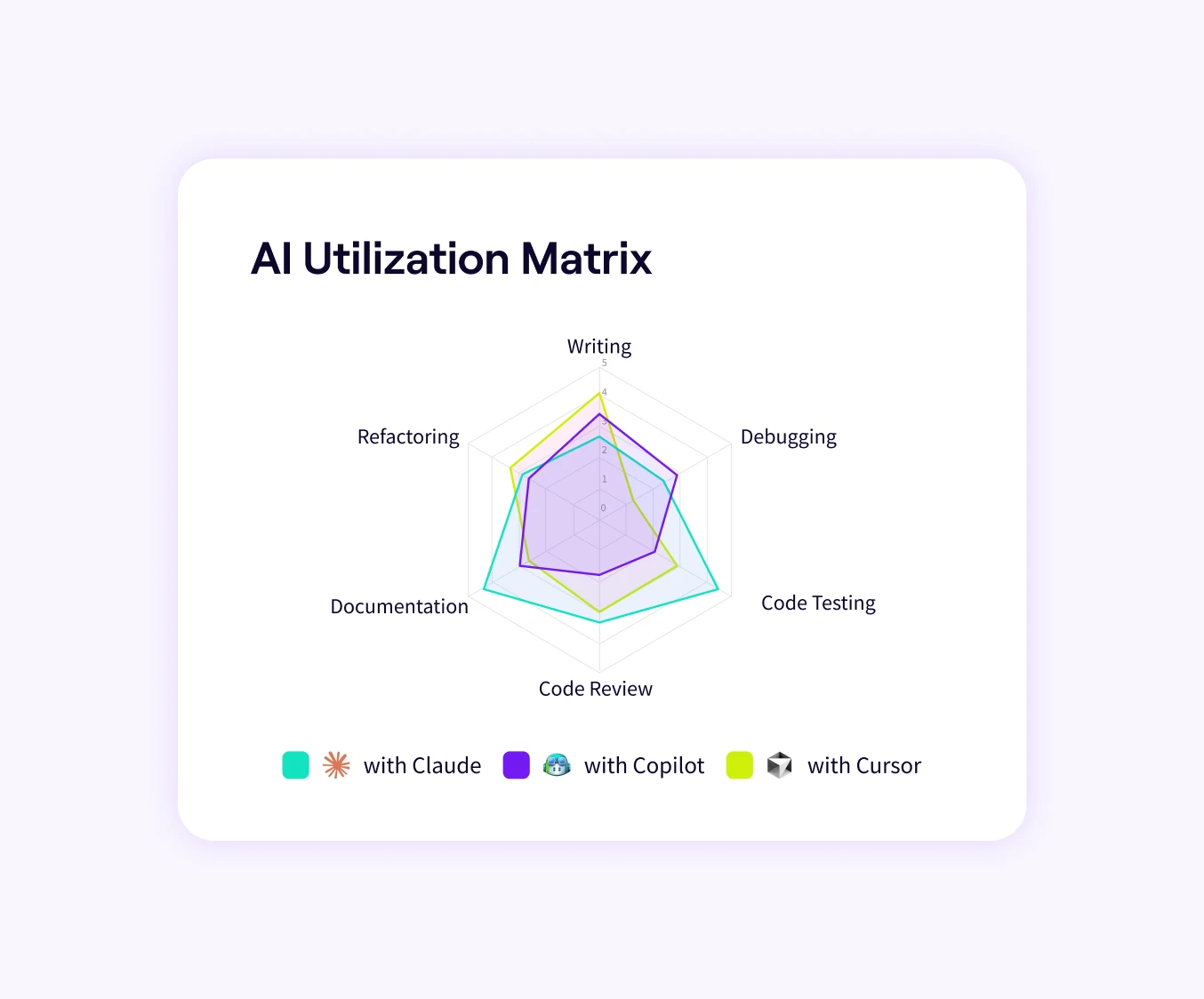

Claude Code in Context

Benchmark Claude Code against GitHub Copilot, Cursor, and other tools using a consistent, objective measurement model. See how it performs relative to the rest of your stack.

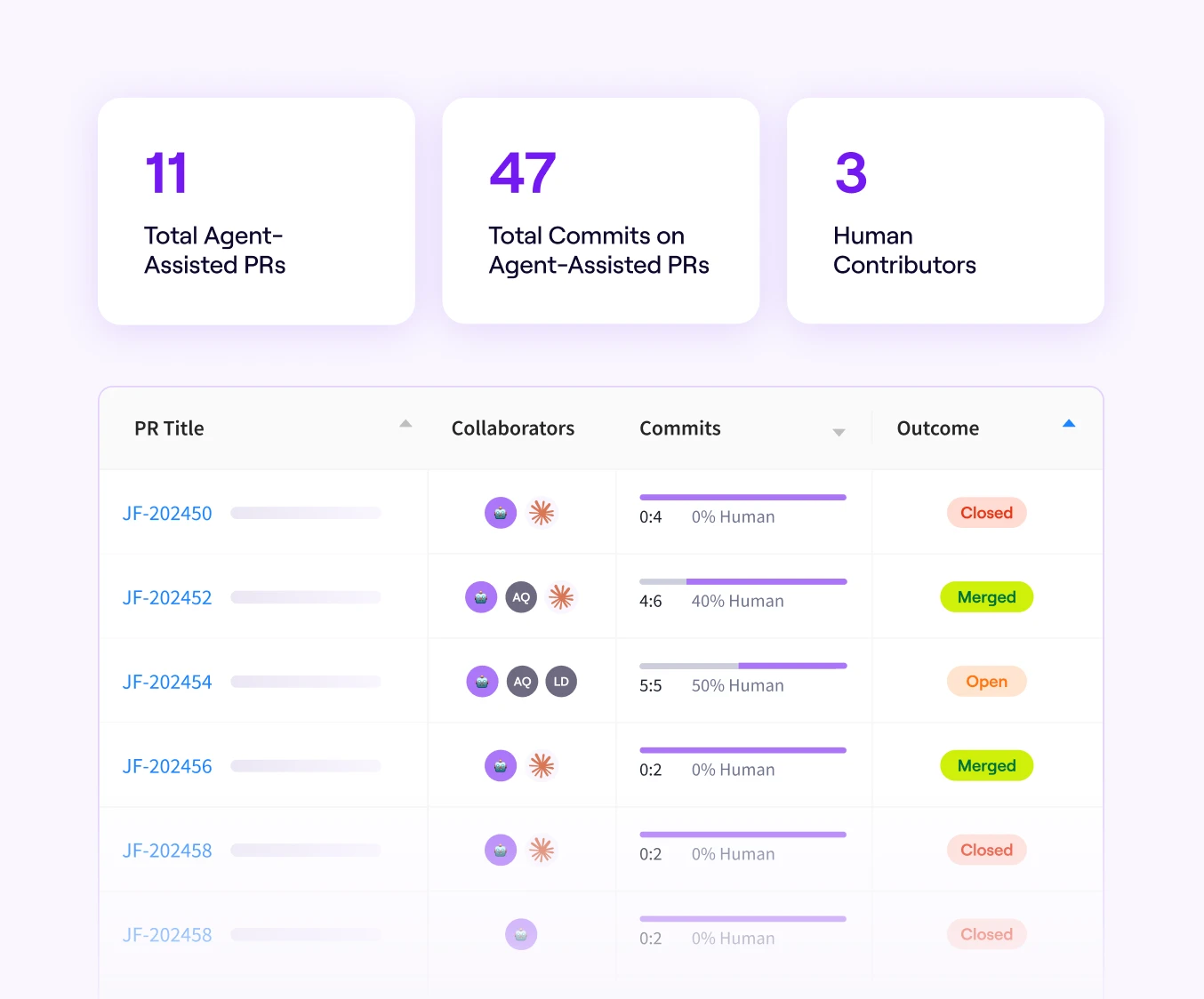

Agentic Workflow Insights

See where Claude Code’s autonomous capabilities are contributing to development output. Get clarity on how agent-driven work compares to human-assisted workflows across your org.

Integrate Your Full AI Tech Stack

Unify insights across assistants, review agents, and emerging systems without changing existing workflows.

AI Impact Research

In-depth frameworks and guidance to support adoption, measurement, and scaling of AI.