What are DORA metrics?

DORA metrics help engineering teams make data-driven decisions so they can continuously improve their practices, deliver software faster, and keep it reliable. The four metrics are Change Lead Time, Deployment Frequency, Change Failure Rate, and Mean Time to Recovery (MTTR).

“You can’t improve what you don’t measure.” It’s a maxim to live by when it comes to DevOps. Making DevOps measurable is key to knowing which processes and tooling work, so you can invest in them, and which don’t, so you can fix or remove them. DORA metrics have become the gold standard for teams aspiring to optimize their performance and achieve the DevOps ideals of speed and stability.

What is DORA?

The DevOps Research and Assessment (DORA) team is a research program that was acquired by Google in 2018. Its goal is to understand the practices, processes, and capabilities that enable teams to achieve high performance in software and value delivery. From 2014 until 2019, the group published its best-known studies: a series of annual reports that benchmark DevOps practices, answer questions common to many DevOps teams, and ultimately provide guidance on how DevOps teams can continuously improve their processes and capabilities.

In their 2018 book, Accelerate, the DORA team identified a set of metrics that they claim indicate a software team’s performance in software development and delivery: Change Lead Time, Deployment Frequency, Mean Time to Recovery (MTTR), and Change Failure Rate. These metrics have come to be known as DORA metrics.

The 4 DORA Metrics

The DORA group found that elite performing software teams – those who deliver the most value, fastest, and most consistently – will optimize for four metrics in particular:

Change Lead Time

Change lead time measures the total time from when work on a change request is initiated to when that change has been deployed to production and thus delivered to the customer. Lead time helps you understand how efficient your development process is. Long lead times may be the result of an inefficient process or a bottleneck somewhere along the development or deployment pipeline.

The most common way of measuring lead time is by comparing the time of the first commit of code for a given issue to the time of deployment. A more comprehensive (though also more difficult to pinpoint) method would be to compare the time that an issue is selected for development to the time of deployment.
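As a rough illustration of the first-commit-to-deployment approach, here is a minimal Python sketch. The function names and the choice to summarize with a median are assumptions made for this example, not something DORA prescribes; the timestamps would come from your own version control and deployment tooling.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Iterable, Tuple


def change_lead_time(first_commit_at: datetime, deployed_at: datetime) -> timedelta:
    """Lead time for one change: first commit to production deployment."""
    if deployed_at < first_commit_at:
        raise ValueError("deployment cannot precede the first commit")
    return deployed_at - first_commit_at


def median_lead_time(changes: Iterable[Tuple[datetime, datetime]]) -> timedelta:
    """Median lead time over (first_commit_at, deployed_at) pairs.

    The median is an assumption of this sketch; a mean or a percentile
    could be substituted depending on how your team reports the metric.
    """
    seconds = [change_lead_time(c, d).total_seconds() for c, d in changes]
    if not seconds:
        raise ValueError("at least one change is required")
    return timedelta(seconds=median(seconds))
```

To use the more comprehensive method instead, the same functions could be fed the time an issue was selected for development in place of the first-commit time.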

Deployment Frequency

Deployment Frequency measures how often a team pushes changes to production. This indicates how quickly your team is delivering software – your speed. DORA tells us that high performing teams endeavor to ship smaller and more frequent deployments. This has the effect of both improving time to value for customers and decreasing risk (smaller changes mean easier fixes when changes cause production failures) for the development team.
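One simple way to put a number on this is to count production deployments in a reporting window and normalize to a per-week rate. The sketch below assumes you already have a list of deployment dates; the function name and the per-week unit are illustrative choices, not part of the DORA definition.

```python
from datetime import date
from typing import Iterable


def deployments_per_week(deploy_dates: Iterable[date], start: date, end: date) -> float:
    """Average production deployments per 7-day week within [start, end], inclusive."""
    if end < start:
        raise ValueError("end must not be before start")
    days_in_window = (end - start).days + 1
    # Only count deployments that fall inside the reporting window.
    count = sum(1 for d in deploy_dates if start <= d <= end)
    return count / (days_in_window / 7)
```

For example, eight deployments in a 28-day window work out to an average of two per week.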

Change Failure Rate

Change Failure Rate measures the rate at which production changes result in incidents, rollbacks, or failures. This indicates the quality of the code you are pushing to production. The lower the rate, the better (higher performing teams have a change failure rate of 0-15%), but the real goal for a team should be to decrease its change failure rate over time as skills and processes improve.

The trickiest piece for most teams is defining what a failure is for the organization. Define it too broadly or too narrowly and you will encourage the wrong behaviors. In the end, the definition of failure is, and needs to be, unique to each organization, service, or even team.
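Because the definition of failure varies, a calculation of change failure rate is easiest to keep honest if that definition is an explicit, swappable rule. The following Python sketch illustrates the idea; the `Deployment` record, its fields, and the default rule are hypothetical and exist only for this example.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Deployment:
    """Hypothetical record of one production change."""
    id: str
    caused_incident: bool = False
    rolled_back: bool = False


def default_is_failure(d: Deployment) -> bool:
    """Example rule only: a change failed if it caused an incident or was rolled back."""
    return d.caused_incident or d.rolled_back


def change_failure_rate(
    deployments: Iterable[Deployment],
    is_failure: Callable[[Deployment], bool] = default_is_failure,
) -> float:
    """Fraction of production changes that count as failures under `is_failure`."""
    items = list(deployments)
    if not items:
        raise ValueError("at least one deployment is required")
    failed = sum(1 for d in items if is_failure(d))
    return failed / len(items)
```

Passing a different `is_failure` function lets each organization, service, or team apply its own definition without changing the calculation itself.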

Mean Time to Recovery (MTTR)

MTTR is about resolving incidents and failures in production when they do happen. It measures the time from when an incident is triggered to when it is resolved via a production change. The goal of optimizing MTTR is, of course, to minimize downtime and, over time, to build out the systems that detect, diagnose, and correct problems when they inevitably occur.
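Following that definition, MTTR can be sketched as the average of resolution time minus trigger time across incidents. The function name and the input shape below are assumptions for illustration; in practice the timestamps would come from your incident management tooling.

```python
from datetime import datetime, timedelta
from typing import Iterable, Tuple


def mean_time_to_recovery(incidents: Iterable[Tuple[datetime, datetime]]) -> timedelta:
    """Mean duration over (triggered_at, resolved_at) pairs."""
    durations = []
    for triggered_at, resolved_at in incidents:
        if resolved_at < triggered_at:
            raise ValueError("an incident cannot be resolved before it is triggered")
        durations.append(resolved_at - triggered_at)
    if not durations:
        raise ValueError("at least one resolved incident is required")
    # sum() needs an explicit timedelta start value to add timedeltas.
    return sum(durations, timedelta(0)) / len(durations)
```

Only resolved incidents are included here; how to treat incidents that are still open is a reporting decision this sketch leaves out.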

Using DORA metrics to improve your DevOps practices

As an engineering leader, you are in the position to empower your teams with the direction and the tools to succeed. By measuring and tracking DORA metrics and trends over time, developers, teams, and engineering leaders can make more informed decisions about what needs to be improved and how to make improvements to the development process. When your DORA metrics improve, you can be confident that you’ve made good decisions to enable your team, and that you are delivering more value to your customers.

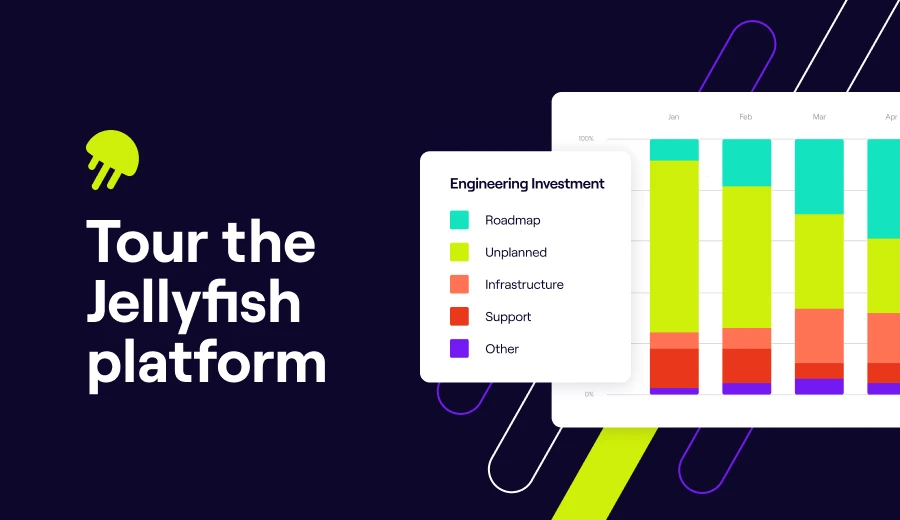

About the author

Lauren is Senior Product Marketing Director at Jellyfish where she works closely with the product team to bring software engineering intelligence solutions to market. Prior to Jellyfish, Lauren served as Director of Product Marketing at Pluralsight.