Jellyfish vs. LinearB

9 out of 10 engineering teams choose Jellyfish over LinearB after a direct evaluation. The reason? Jellyfish doesn’t just surface metrics. It tells you what to do with them. From AI impact measurement and delivery forecasting to developer experience insights, Jellyfish gives engineering leaders the clarity to act, not just the data to observe.

See how we compare

Engineering Management

DORA & productivity metrics

Team goals, Slack & Email alerts

Custom analysis and APIs

AI-powered chat assistant

Industry benchmarking

Team-level comparison

Resource and investment allocation

R&D capacity planning

Delivery forecasting & scenario planning

Deliverables status tracking and reporting

Board-ready executive dashboards

AI Impact

Adoption insights based on system data

Connects AI usage to resource allocation

Integrations with major tools (Cursor, Copilot, etc.)

Multi-tool comparison

Usage data linked to delivery metrics

AI Impact Surveys with AI NPS

AI-generated Exec Reports with ROI metrics

Code review agent insights

Developer Experience

Qualitative developer experience surveys

DevEx metrics

Financial Reporting

Cost capitalization reporting

SOC-1 Type II financial compliance

100% audit pass rate

Administration & Security

SOC-2 Type II compliance

No source code access required

Fully self-service configuration

Role-based access controls

Group-based access controls

SSO

Automated data model (no manual repo config)

Embedded services

Low cost of maintenance

SCIM

“DX and LinearB don’t treat team-based metrics or person-based metrics as first-class citizens like Jellyfish does.”

Jane Hatfield

Director of Engineering at Jane.app

“LinearB didn’t provide the breadth of metrics from board level down to IC that Jellyfish does.”

Adam Llewlyn

Program Delivery Lead at Cyara

“We were looking for the ability to see metrics for engineering leaders and at the VP/Product level. LinearB’s UI was very clunky.”

Xaviar Steavenson

VP of Engineering at WebPros

Where LinearB falls short, Jellyfish goes deeper

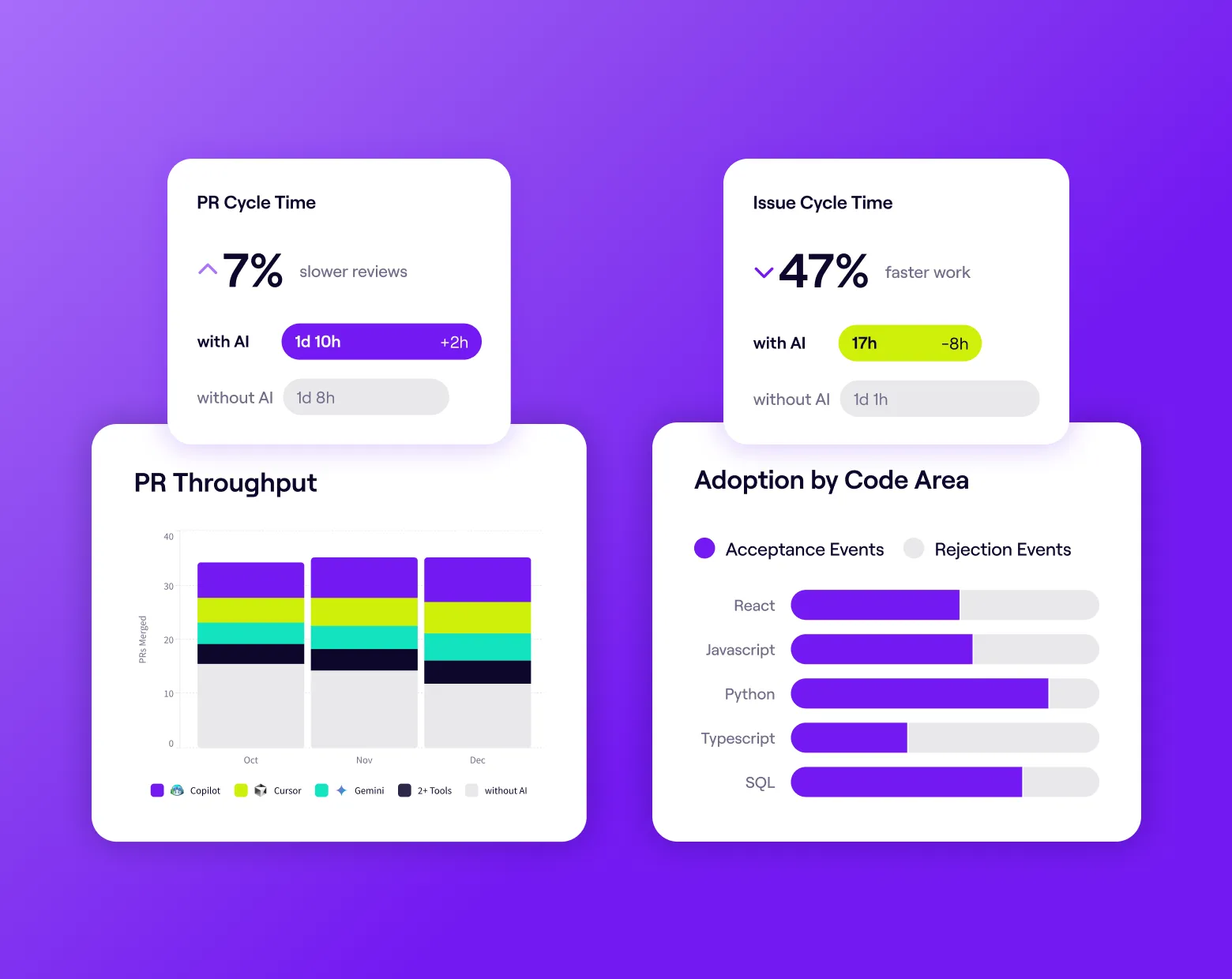

Better Visibility into AI Transformation

Move beyond seat counts to real productivity metrics. Jellyfish ingests signals from AI coding assistants and code review agents to measure Issue and PR Cycle Time lift.

Compare Power Users to Idle Users and benchmark against 20M+ PRs to prove your AI investment is working.

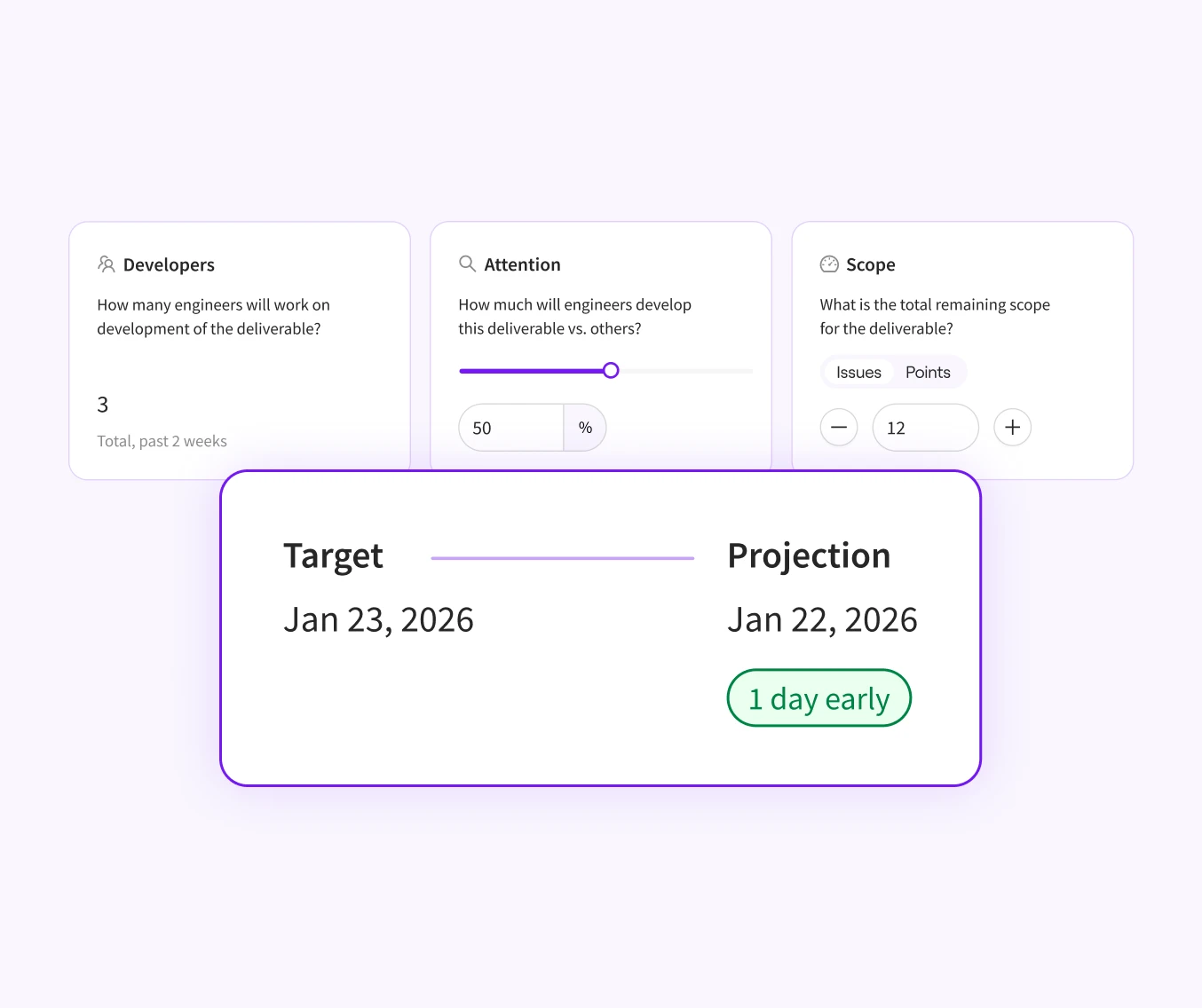

Increase Delivery Predictability

Stop guessing at ‘done.’ Jellyfish uses historical SCM and issue tracking signals to project completion dates.

Model scenarios to see how headcount or scope changes impact delivery in real time, and make trade-offs before you miss a deadline.

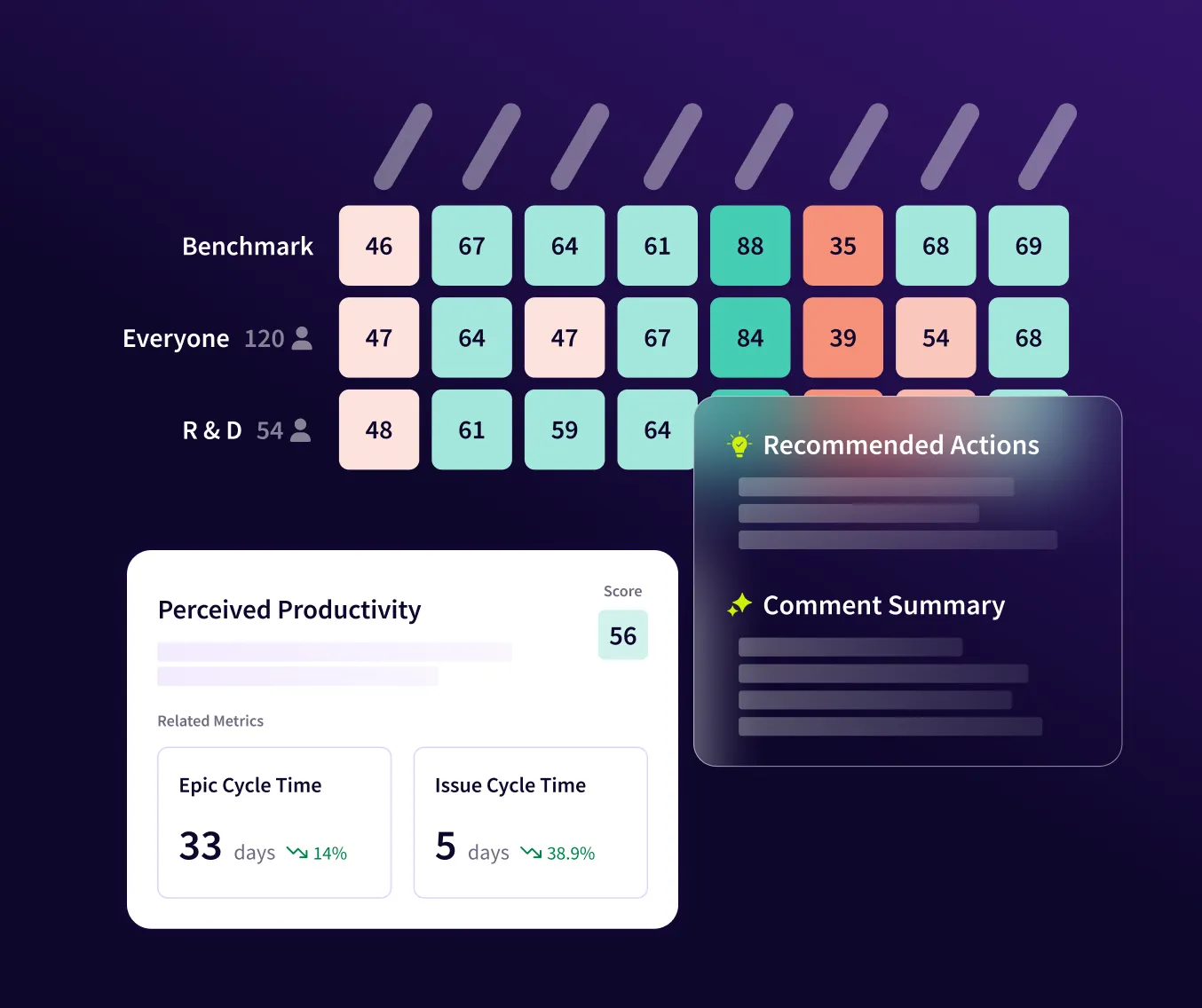

Connect Sentiment to Quantitative Signals

Capture the ‘why’ behind your metrics with research-backed DevEx surveys.

Jellyfish correlates qualitative feedback — like tool satisfaction or nitpicking in reviews — with quantitative data like PR cycle time, then surfaces Recommended Actions to improve engineering health.

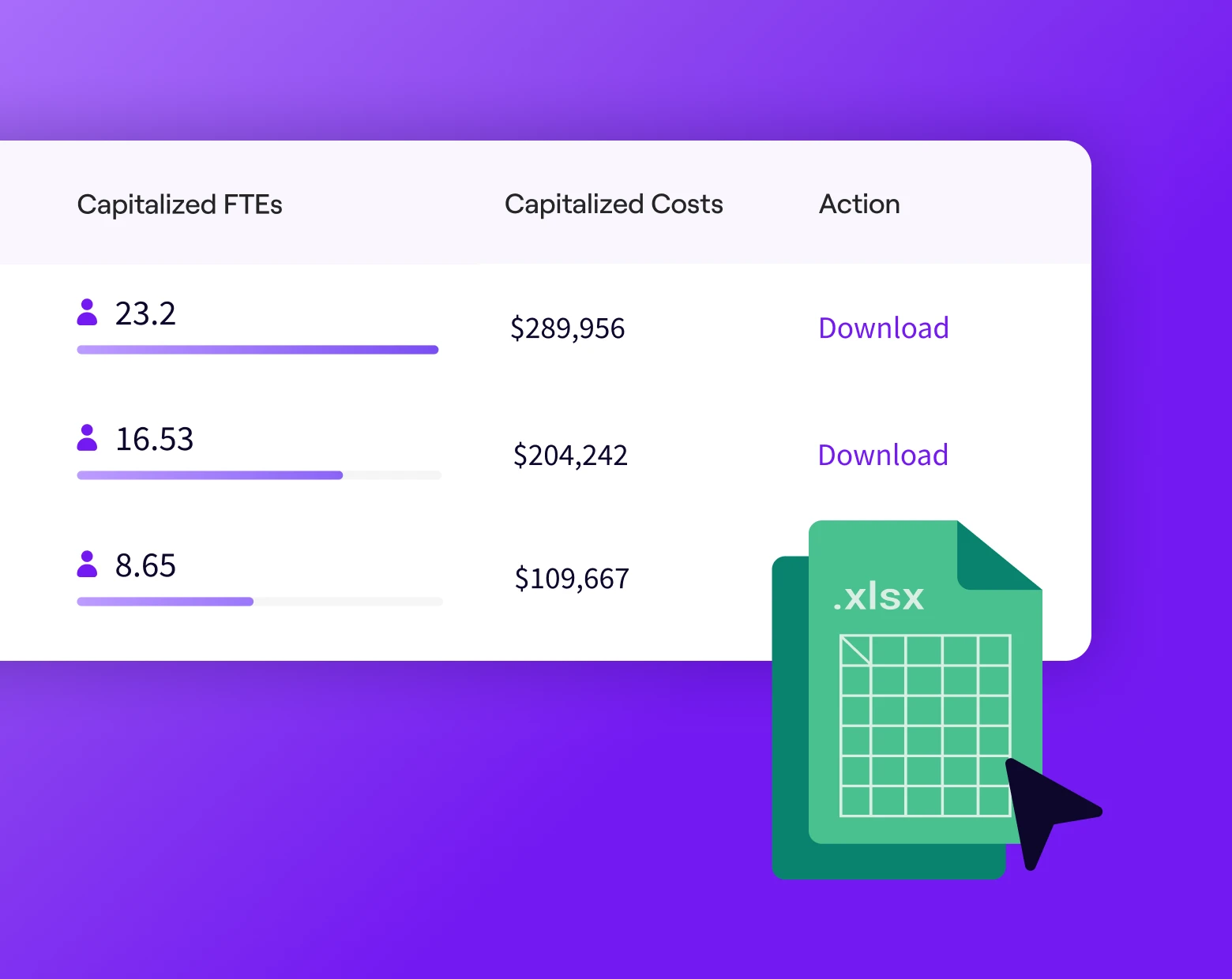

Automate Audit-Ready R&D Reporting

Eliminate manual developer time-tracking. Jellyfish categorizes work from Jira and Git signals to automatically generate audit-ready capitalization reports that maximize R&D tax credits and EBITDA.

Jellyfish is SOC-1 Type II compliant with a 100% audit pass rate — LinearB’s basic capitalization lacks SOC-1 compliance and requires manual setup.