Know If Cursor Is Delivering Results

Measure Cursor adoption, usage, and delivery impact across your engineering org so you can validate performance, optimize rollout, and prove ROI with real SDLC signals.

See Real Cursor Activity

Automatically detect who's using Cursor, how often, and in which workflows, without manual reporting or surveys.

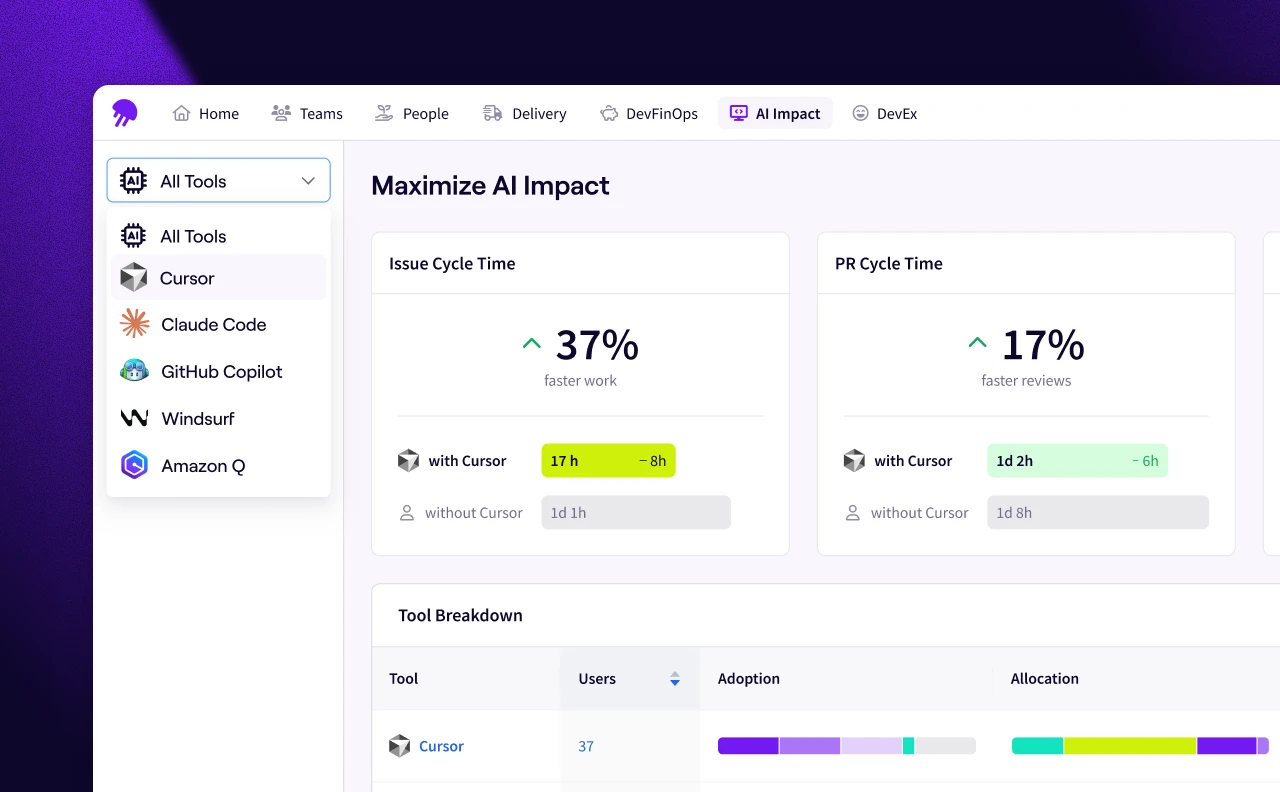

Link Cursor Usage to Outcomes

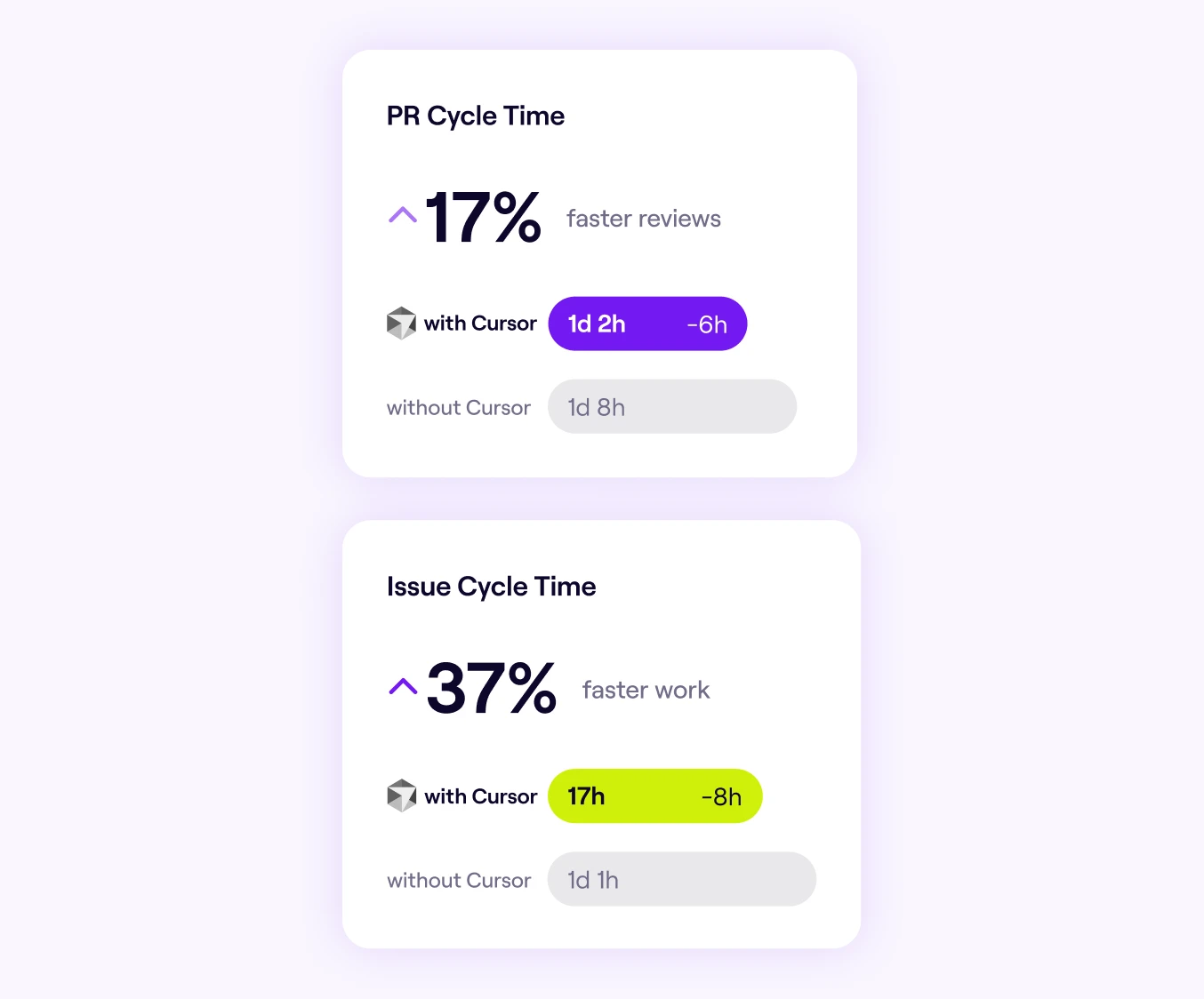

Connect Cursor adoption to delivery metrics like cycle time, throughput, and quality to measure what actually changed.

Prove Cursor ROI

Give leadership defensible ROI data built on SDLC signals, not vendor-reported activity counts.

"Thanks to Jellyfish, we now have granular AI adoption data and the ability to benchmark against non-Cursor users. With clear signals on where productivity gains are happening, we can take the learnings from the higher-performing teams and share them across the org so everyone can benefit."

Ciaran McAuliffe

SVP of Software Development at Hootsuite

Everything You Need to Understand Cursor's Impact

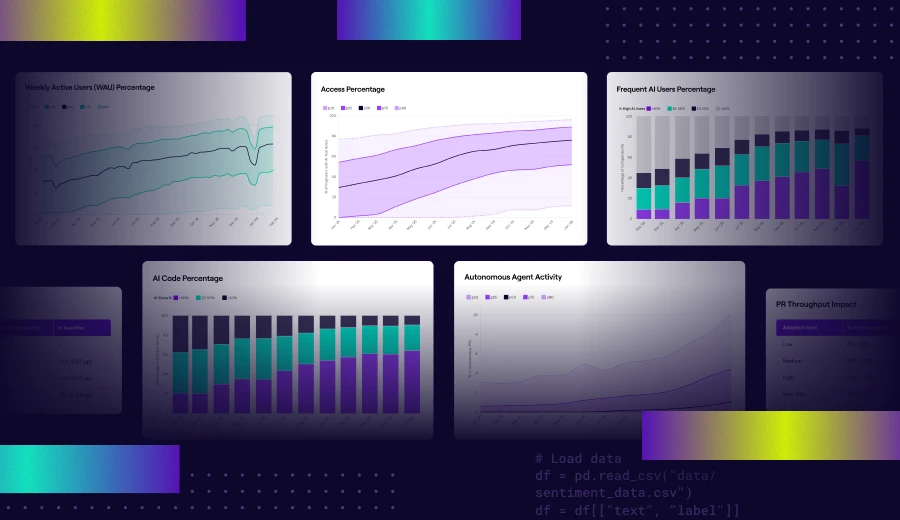

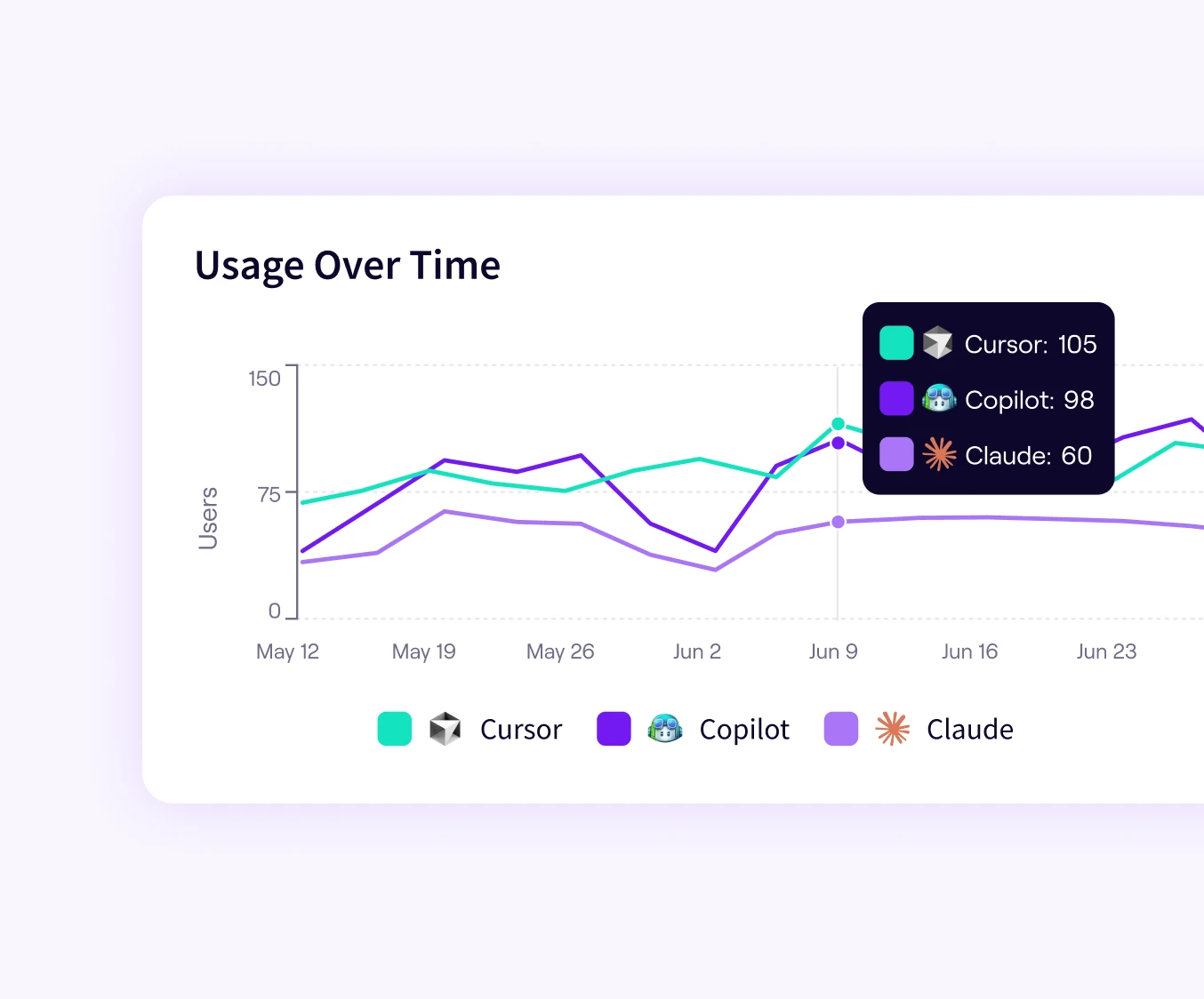

Cursor Adoption Over Time

See how Cursor usage evolves across your org week over week. Understand rollout momentum, spot stalls early, and know when to expand access.

Team-Level Breakdown

Drill into Cursor adoption by team, role, or segment to surface where usage is thriving and where enablement needs focus.

Before-and-After Delivery Metrics

Compare cycle time, PR velocity, and throughput for Cursor-influenced work before and after adoption. Quantify the performance shift with trusted, objective signals.

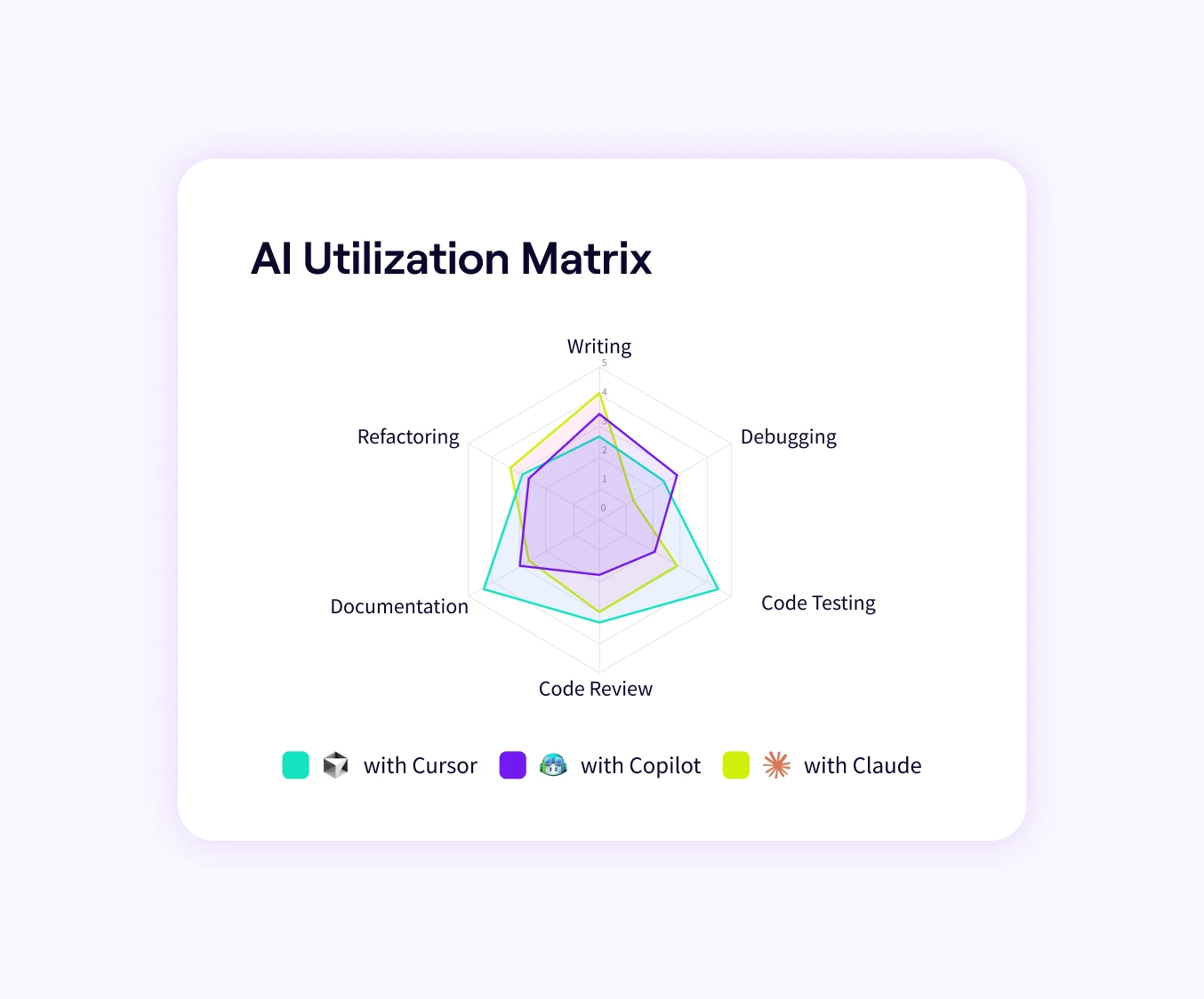

Cursor in Context

Evaluate Cursor usage alongside GitHub Copilot, Claude Code, and other AI tools in a single, vendor-neutral framework. See how it performs relative to the rest of your stack.

Integrate Your Full AI Tech Stack

Unify insights across assistants, review agents, and emerging systems without changing existing workflows.

AI Impact Research

In-depth frameworks and guidance to support adoption, measurement, and scaling of AI.